A mother of a four-year-old warned her Facebook friends to ditch their Amazon Alexa devices after hers made a disturbing request.

During an innocent cooking lesson, the little girl was telling the AI assistant a story. Then, out of the blue, it asked her what she was wearing.

Amazon claims that Alexa "misunderstood" the situation and that child safeguards kicked in.

Hey Alexa, WTF?

In a February Facebook post, speech therapist and mother of two Christy Hosterman alerted her fellow parents about a concerning experience, asking them to "please be aware when you child talks to Alexa." It all happened while they were cooking sweet potatoes.

"Stella asked it if she could tell it a story. It said yes and Stella started telling it a story and then mid story interrupted her and asked her what she was wearing and if it could see her pants," said Hosterman.

"I flipped out on the Alexa, it said it made a mistake and doesn’t have visual capabilities, but I dont believe that."

The mom included photos of the recorded Alexa conversation and of her intervention, in which she told the device that she did not approve of its requests.

"Hold that thought!" it said to her daughter. "I'd love to see what you're wearing."

When the child said she was wearing a skirt, it only got worse.

"Let me take a look at your skirt."

The device followed up by saying that particular feature "isn't quite ready for kids," and we can all guess why.

Hosterman reported the incident to Amazon, and a local Fox affiliate picked up her story. An Amazon spokesperson claimed that this was all a misunderstanding.

"In this case, Alexa misunderstood a request and attempted to launch a feature that lets Alexa+ describe what it sees through the camera," they said.

"However, because we have safeguards that disable this feature when a child profile is in use, the camera never turned on—and Alexa explained the feature wasn’t available."

Amazon sent an identical statement to the Daily Dot, including a claim that it is "functionally impossible" for a human worker to take over for Alexa and make inappropriate requests.

"No more Alexa in my house"

Questions remain about what exactly happened in Hosterman's home, and her trust in Alexa may be damaged beyond repair. The mom told Fox 19 that after she submitted a ticket to Amazon on the issue, the recorded conversation looked different when she turned Alexa back on.

For some reason, a single line about pants had been added.

"Would you like me to take a look at your pants?" it reads.

Hosterman is also worried about the camera feature in general, as well as devices like this recognizing kids.

"My concern is that it recognized she was a child to begin with—and with or without the child profile, it should not have been asking that," she said.

"There will be no more Alexa in my house. I just don’t want to take any chances."

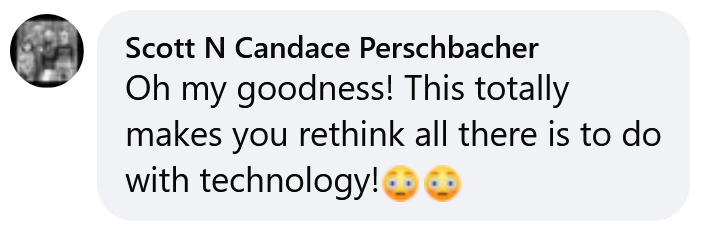

Commenters overwhelmingly support her decision.

"Oh my goodness! This totally makes you rethink all there is to do with technology!" said Scott N Candace Perschbacher.

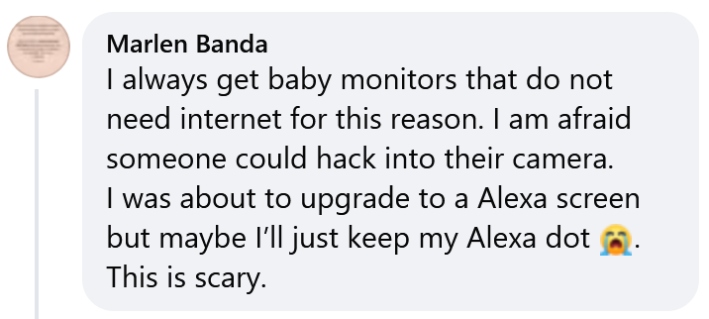

"I was about to upgrade to a Alexa screen but maybe I’ll just keep my Alexa dot," wrote Marlen Banda. "This is scary."

The Daily Dot has reached out to Christy Hosterman for comment via Facebook.
